Overview

When loading from source systems that don’t yet have the SQL Server 2008 Change Data Capture (CDC) enabled, using a checksum calculation to determine if data has changed is a critical Data Warehouse load concept to understand. This will allow you to read the source, that can have dozens of columns, and with a single lookup, and a single compare (of a integer value) and determine if data within that vast source row has changed. Not to mention speed…. this method is much faster than a traditional compare of many fields. I have build a very simple example (3 columns in the source), will check if the data has changed in 2 of those columns, and perform the lookup/insert logic based on the outcome.

Setup

You will need to install the checksum transformation. [edit] –> as a result of this blog post, there were some comments about another module to create hash-values, also a worthwhile component to look at! Available from codeplex. <– [edit] I have included the sample tables, and data here.

The package

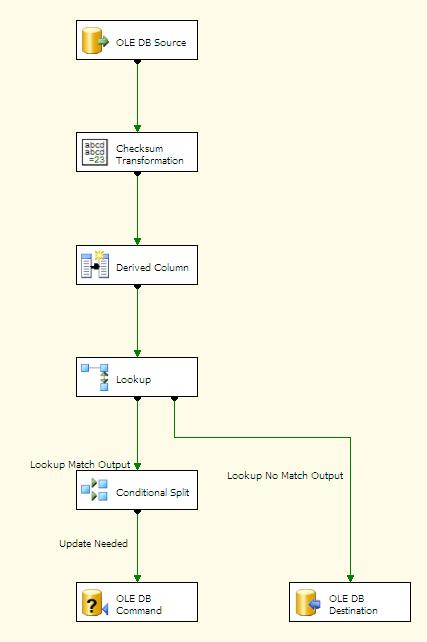

- OLE DB Source – reads the src table

- chksum transformation calculates the checksum

- derived column is setting rec_create_date and rec_mod_date with a getdate()

- Lookup is using name to find the row in the target, if it does not exist, insert otherwise return checksum from the database

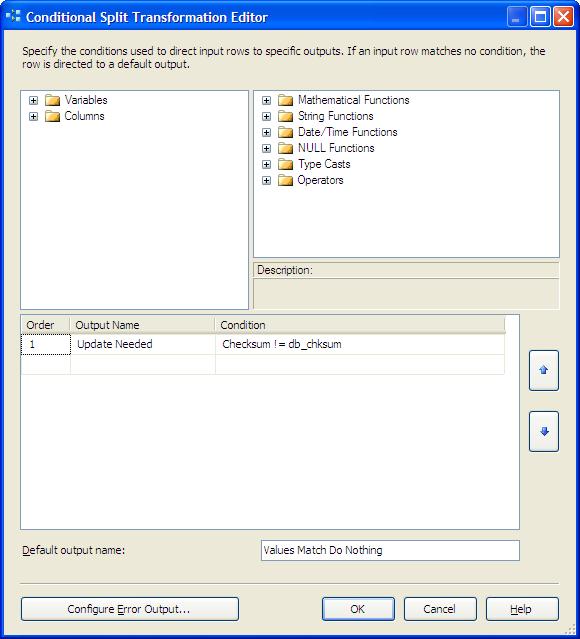

- conditional split, check to see if the calculated checksum (based on the source) matches the checksum from the lookup

- OLE DB Command, update the row (the checksum’s did not match)

- OLE DB Destination, insert a new row

Now a look a little closer at the main components of this package. The assumption here is that “fname” is the key value for the row, if that has changed, we want to insert a new row. If there is any change to the web_address, or email_address for this row we want to update the row. This will allow us to do a compare without having to worry case sensitivity, null values, varchar comparisons to multiple fields etc. Also, when you add an additional field to the source, all you need to do is add to the check-box here, and automatically all rows will be tested with the new column. The algorithm here is the default crc32, there is also a framework checksum (md5), and original. I would generally use the md5 version (although I forgot to change the default in this example), as, particularly with large calculations it will avoid a possible collision in the number.  The lookup is based on first-name and returns the value for the previously calculated checksum into db_chksum. Notice that the other fields from the databse are not being returned for the comparisons, we only need the checksum

The lookup is based on first-name and returns the value for the previously calculated checksum into db_chksum. Notice that the other fields from the databse are not being returned for the comparisons, we only need the checksum  The ENTIRE row (in this case 2 fields, but there is no limit to this) is now checked with one simple compare of the newly calculated checksum, and the checksum returned from the database. If nothing changed… nothing will be done…. if anything changed, update all the data in the row.

The ENTIRE row (in this case 2 fields, but there is no limit to this) is now checked with one simple compare of the newly calculated checksum, and the checksum returned from the database. If nothing changed… nothing will be done…. if anything changed, update all the data in the row.  The insert – simple (just note I do not map the rec_mod_date on the insert only the rec_create_Date) to allow us to understand when rows are inserted from the source vs updates. Normally fact loads also have additional audit info, but that is another topic.

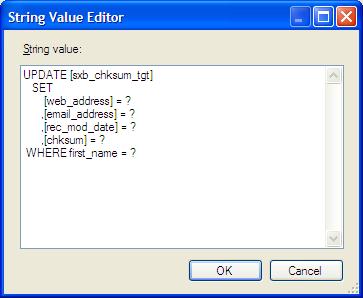

The insert – simple (just note I do not map the rec_mod_date on the insert only the rec_create_Date) to allow us to understand when rows are inserted from the source vs updates. Normally fact loads also have additional audit info, but that is another topic.  The Update – also simple (note that we are updating the whole row including the checksum value) The rec_create_date is not touched here, but rather the rec_mod_date is update to indicate the modifications.

The Update – also simple (note that we are updating the whole row including the checksum value) The rec_create_date is not touched here, but rather the rec_mod_date is update to indicate the modifications.

Putting it together

On the first run, the 1 row runs down the insert leg, and inserts a row with a checksum value of: 1409434363 If you run the package again, without changing data, nothing happens, because the calculated value on the next execution matches the value already in the database. Then…

UPDATE sxb_chksum_src SET web_address =

‘http://StefBauer.wordpress.com/’

Notice the only thing changed is the capitalization of the web address, but that is enough to create a new checksum value. Run again… and the checksum value from the input is -252803585 which does not match the database value, and the row runs down the update leg. You will now see the rec-mod-date update, the source values are updated, and the new checksum is stored as well.

You might want to also consider the Multiple Hash component (http://ssismhash.codeplex.com/) – it’ll make a bunch of different SHA (up to 512 bit) and MD5 hashes.

The same package run on 2 computers, each containing a simple data flow similar to your example – computes 2 totally different checksums (using the Original algorithm).

A few things to look at… I would recommend using the “extra crispy”-new recipe (not the original recipe) and see what results you get there, I have had issues with the original calculation, and have not seen any downside to the new version. Secondly, different computers may be the issue, I am not sure what it is using as the seed value when building the hash for the calculation. Thirdly, send a note to their support, I have found them to be VERY responsive and helpful. Last, but not least, you can check on codeplex.com there are several MD5 calculations that you could try to achieve the same process. Like I said, I have had great luck with the “new” calculation (there were issues with the original), so I would start there.

I am glad you are looking at the checksum solution!! It is an important skill to master, and it sounds like you are there!

I am finding the CRC32 algorithm not working as expected. It seems to be giving different checksum values at each run. What is weird, is this was not an issue in our dev environment however has been a problem since we moved to prod. Very strange.

I have seen a few other users have reported this same issue!

I have seen that behavior in a prior version. In the current version, I have had no issues with the new md5 type algorithm.

I tested this component using “framework checksum” because it is supposed to use a md5 algorithm. I expected to have a 32 characters string as a result, which is not the case with this component (the outpout is an integer). So I will use Multiple Hash component which is give me the expected result…Am I wrong?

I agree…. based on testing, other’s feedback (as well as your experience) I need to update this post, there are clearly some issues with this component. I stand by need to use (and understand) checksum processing for loading data…. I think the module I discuss has some issues. I have had good feedback about the http://ssismhash.codeplex.com/ Multiple Hash component you can get from codeplex. I need to do some additional testing before I post the changes, but you are clearly on the right track.

Thanks for the feedback!!!